How Bricklayer Treats Investigative Knowledge as a System Asset, Not a Prompt Input

In every real security investigation, understanding accumulates. One analyst surfaces a signal. Another adds depth. A third brings in external threat context. Decisions get made based on everything that came before.

That is how a SOC actually works.

Most agentic AI systems do not work that way. They execute tasks in isolation. Each agent starts from scratch, without awareness of what came before. When one task completes, context disappears. The next agent cannot build on prior reasoning. The investigation does not compound. It resets.

This challenge is rooted in the fundamental constraints of Large Language Models. They were not built for persistent, evolving systems. Context windows are limited. As inputs grow, attention dilutes and signal degrades. Model performance is highly sensitive to how information is structured and ordered. In a multi-agent system, these problems compound quickly. The difference between agents doing work and a system that understands its work comes down to context.

With the latest Bricklayer platform release, we are introducing Insights, Insight Groups, and Mentions as part of Multi-Agent Context Engineering (MACE), our system architecture that defines how investigative context is created, accumulated, and shared across AI agents.

1. Insights: The Atomic Units of Context

When an agent completes a task, what does it actually produce? In most systems, the answer is unstructured text. It may be readable to a human, but it is difficult for another agent to reliably interpret, reuse, or build upon.

Bricklayer changes this with Insights.

Insights are the individual, structured pieces of information generated by an agent while performing a task. They are pointed, specific, and designed for reuse. Rather than burying key findings inside paragraphs, each observation, intermediate result, decision, or recommendation is captured as a discrete unit.

This gives downstream agents precise control over what context they consume. Instead of parsing entire outputs, they can reference exactly what they need: a specific data point, a classification, a derived conclusion, or a confidence score.

This also fundamentally improves traceability. There is no longer a need to sift through large blocks of text to understand where something came from. Every conclusion can be traced back to the exact insight that produced it. That traceability is not just a technical property. It is what allows analysts to trust what the system produces, question it when something looks off, and stand behind the outcomes it informs.
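To make the idea concrete, here is a minimal sketch of what a discrete, traceable insight could look like. It is an illustration only: the `Insight` fields, the `trace` helper, and the sample findings are all assumptions made for this example, not Bricklayer's actual schema or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Insight:
    """Hypothetical shape for one structured finding (not Bricklayer's schema)."""
    insight_id: str
    kind: str                      # e.g. "observation", "decision", "recommendation"
    statement: str
    produced_by: str               # name of the task that emitted it
    confidence: Optional[float] = None
    derived_from: tuple = ()       # ids of the insights this one builds on


def trace(insight_id, store):
    """Return the given insight id followed by every id it was derived from."""
    lineage, stack = [], [insight_id]
    while stack:
        current = stack.pop()
        if current in lineage:
            continue
        lineage.append(current)
        stack.extend(store[current].derived_from)
    return lineage


# Made-up sample findings, for illustration only.
insights = [
    Insight("obs-1", "observation", "Login from a previously unseen country", "l1_triage"),
    Insight("obs-2", "observation", "MFA prompt was denied twice before success", "l1_triage"),
    Insight("dec-1", "decision", "Classify alert as likely account takeover",
            "l1_triage", confidence=0.8, derived_from=("obs-1", "obs-2")),
]
store = {i.insight_id: i for i in insights}
lineage = trace("dec-1", store)
print(lineage)
```

Because the decision carries the ids of the observations behind it, a reviewer (or a downstream agent) can walk from a conclusion back to its evidence without rereading free text.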

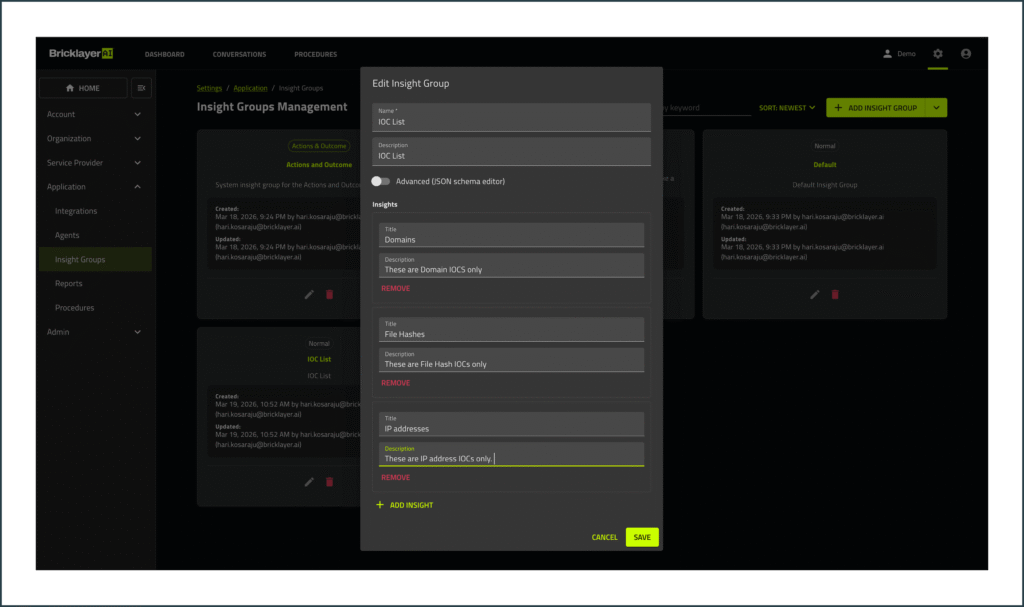

2. Insight Groups: Structuring Insights Into Reusable Knowledge

If Insights are the building blocks, Insight Groups are how they are organized and operationalized.

Each task produces an Insight Group: a structured, task-specific collection of insights that captures the full working context. It includes not just what was found, but what went into it: the inputs considered, the transformations applied, the reasoning followed, and the resulting outputs or recommendations. An Insight Group can be created for any type of agent-to-agent workflow.

Insight Groups are not just outputs. They are defined artifacts. They turn every task into a building block for the next. For every task, the expected types of insights can be pre-defined, ensuring consistency, standardization, and repeatability across agents and workflows. Over time, this allows agents to produce more consistent, high-quality insights aligned to specific task types.
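One way to picture pre-defined insight types is as a small contract that each task's group is checked against. The sketch below assumes a hypothetical `InsightGroup` class and four illustrative kinds (`input`, `transformation`, `reasoning`, `output`) drawn from the description above; the real platform may model this quite differently.

```python
from dataclasses import dataclass, field


@dataclass
class InsightGroup:
    """Hypothetical container for the insights produced by one task."""
    task: str
    expected_kinds: frozenset            # insight kinds pre-defined for this task type
    insights: dict = field(default_factory=dict)   # insight_id -> (kind, statement)

    def add(self, insight_id, kind, statement):
        # Reject anything the task definition did not declare up front.
        if kind not in self.expected_kinds:
            raise ValueError(f"{kind!r} is not defined for task {self.task!r}")
        self.insights[insight_id] = (kind, statement)

    def missing_kinds(self):
        """Kinds the task was expected to produce but has not produced yet."""
        produced = {kind for kind, _ in self.insights.values()}
        return set(self.expected_kinds) - produced


group = InsightGroup(
    "l1_triage",
    frozenset({"input", "transformation", "reasoning", "output"}),
)
group.add("in-1", "input", "Alert: impossible-travel sign-in for one user account")
group.add("re-1", "reasoning", "Both source locations are outside the user's usual pattern")
group.add("out-1", "output", "Escalate to Level 2 investigation")
print(sorted(group.missing_kinds()))
```

In this example the group still lacks a `transformation` insight, which is the kind of gap a pre-defined structure makes visible before the handoff rather than after it.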

This mirrors how effective teams operate. When work is handed off between individuals, the most efficient transitions happen when context is clearly structured: what was done, why it was done, and what should happen next. Insight Groups bring that same discipline into an agentic system.

Consider a common SOC workflow: Level 1 (L1) alert triage handing off to a Level 2 (L2) investigation. In most AI systems today, that handoff is where the context gap becomes evident. The L1 triage agent produces an output, but the L2 agent starts cold, without access to the triage reasoning, the indicators evaluated, or the confidence behind the classification. So L2 ends up re-validating instead of building. Not because nothing was passed, but because what was passed is not usable as a continuation of the investigation. You have multiple agents, but not a system.

With Insight Groups, however, the L2 agent inherits the structured context established by the L1 agent, including the evidence evaluated, the reasoning applied, and the resulting conclusions. From there, it can consume the full group or selectively reference individual Insights depending on the needs of the task. Each layer of the investigation builds on the last instead of reconstructing it. That is the difference between agents doing work and a system that understands its work.
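The choice between inheriting the full group and referencing individual Insights can be sketched as a single selection step. Everything here is assumed for illustration: the `build_context` function, the dictionary representation of a group, and the sample statements are not taken from the product.

```python
def build_context(group, only=None):
    """Return the insights a downstream agent should receive.

    group: dict mapping insight id -> statement (hypothetical representation)
    only:  optional list of insight ids; None means inherit the full group
    """
    if only is None:
        return dict(group)
    unknown = [i for i in only if i not in group]
    if unknown:
        raise KeyError(f"not in upstream group: {unknown}")
    return {i: group[i] for i in only}


# Made-up L1 triage output, for illustration only.
l1_group = {
    "evidence": "Two sign-ins 20 minutes apart from different continents",
    "reasoning": "Travel time between the locations is not physically possible",
    "classification": "Likely account takeover",
}

full = build_context(l1_group)                              # L2 inherits everything
narrow = build_context(l1_group, only=["classification"])   # L2 takes one insight
print(len(full), list(narrow))
```

Either way, the L2 agent starts from the L1 agent's structured findings instead of re-deriving them.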

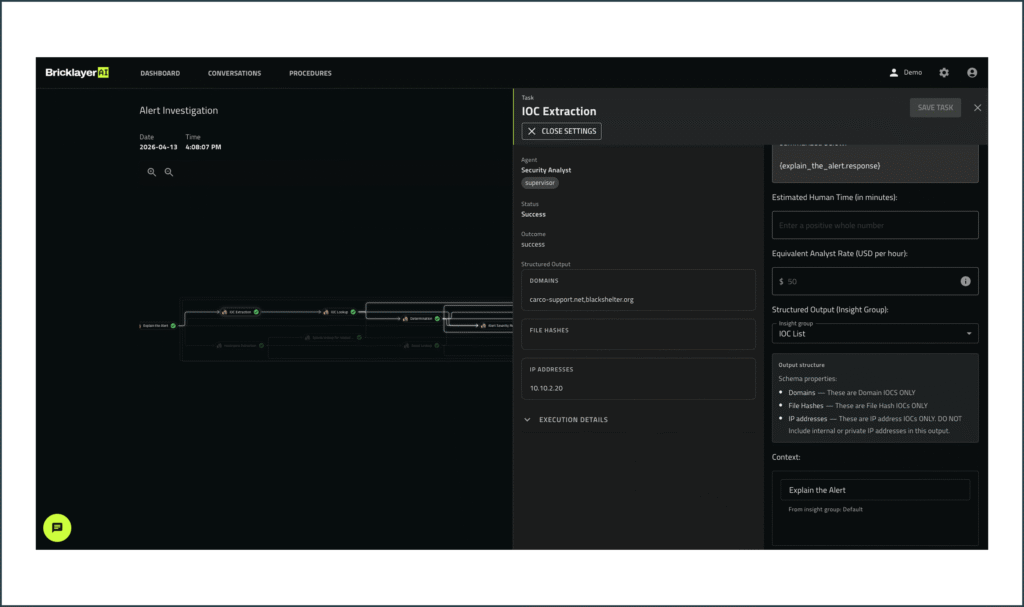

Within any task, the execution details are visible to the analyst, with the ability to click through and view the context received by the agent. Analysts can review the full context behind any conclusion, not just the outcome itself. If something looks wrong, they can rerun part of the workflow without restarting the entire investigation. A flawed step can be corrected at the source rather than quietly cascading forward. That visibility keeps humans in control.

3. Mentions: Human Control Over Context

Insight Groups ensure that investigative knowledge is structured and available. Mentions give humans direct control over exactly what flows into each next step, and what does not.

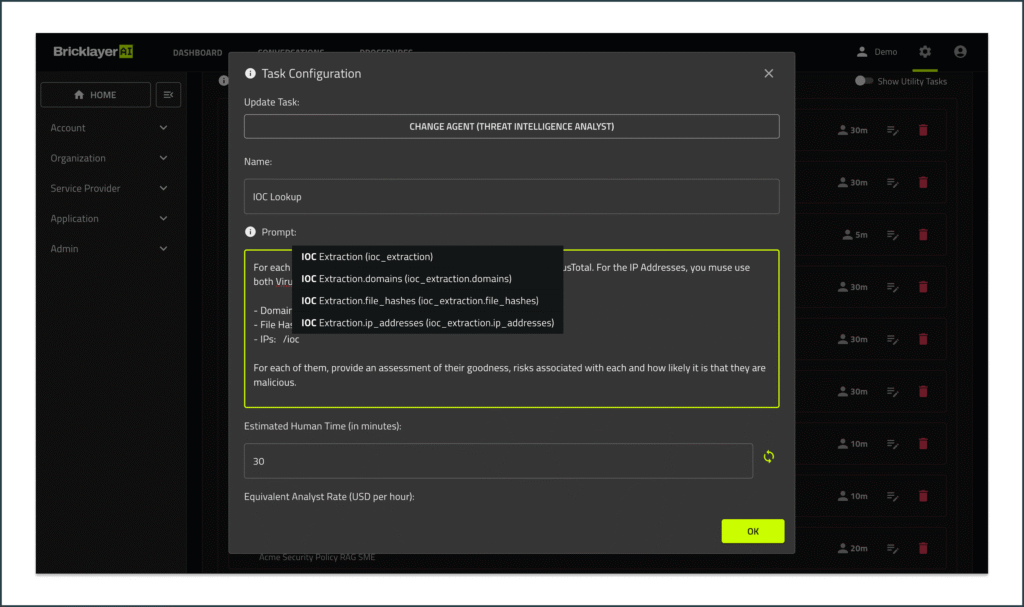

Through Mentions, analysts can map specific pieces of prior context directly into a task prompt. Rather than relying on the system to decide what is relevant, they choose precisely what should carry forward. This matters in several ways.

- Context length. Large investigations generate significant prior output, and bloated prompts dilute reasoning quality. Mentions keep inputs surgical.

- Content sensitivity. Not all context should flow everywhere. Being able to deliberately scope what enters a task is as much a governance benefit as a usability one.

- Prompt precision. When an analyst knows that a specific prior finding is directly relevant to the next task, Mentions give them a mechanism to act on that expertise inside the workflow.

In the alert triage workflow, after the L1 agent produces its Insight Group, the analyst can map a single relevant Insight into the L2 agent’s task rather than passing everything forward. The L2 agent receives exactly what it needs, the prompt stays clean, and context that does not apply to the next step neither dilutes reasoning nor creates unintended exposure.
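As a rough sketch of that mechanism, a mention can be thought of as a placeholder in the task prompt that is replaced with exactly one prior insight. The `@{group.insight}` syntax, the `resolve_mentions` function, and the sample data below are invented for this example; the post does not specify how Mentions are written or resolved in the product.

```python
import re

# Hypothetical mention syntax: @{group_name.insight_id}
MENTION = re.compile(r"@\{([\w-]+)\.([\w-]+)\}")


def resolve_mentions(prompt, groups):
    """Replace each mention with the statement of the insight it names."""
    def substitute(match):
        group_name, insight_id = match.group(1), match.group(2)
        try:
            return groups[group_name][insight_id]
        except KeyError:
            # Fail loudly so a typo never silently drops context.
            raise KeyError(f"unknown mention: {match.group(0)}") from None
    return MENTION.sub(substitute, prompt)


# Made-up upstream group, for illustration only.
groups = {
    "l1_triage": {
        "classification": "Likely account takeover",
        "raw_logs": "Full sign-in log export for the affected user",
    }
}

prompt = "Investigate further. Prior finding: @{l1_triage.classification}"
resolved = resolve_mentions(prompt, groups)
print(resolved)
```

Only the mentioned insight reaches the prompt. The `raw_logs` entry stays out of the L2 task unless an analyst explicitly mentions it, which is the scoping behavior described above.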

As the investigation deepens, the same precision applies at every handoff. Understanding accumulates. Noise does not.

The same precision extends to reporting. Specific insights can be mapped to the sections of a report where they are most relevant, rather than producing undifferentiated summaries that leave analysts to do the organizational work themselves.
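The report case follows the same pattern: a mapping from report sections to the insights that belong in each. The sketch below is an assumption-laden illustration; `build_report`, the section names, and the insight ids are hypothetical.

```python
def build_report(section_map, insights):
    """Render each section heading followed by the insights mapped to it."""
    lines = []
    for section, insight_ids in section_map.items():
        lines.append(section)
        lines.extend(f"- {insights[i]}" for i in insight_ids)
        lines.append("")               # blank line between sections
    return "\n".join(lines).rstrip()


# Made-up insights and section layout, for illustration only.
insights = {
    "classification": "Likely account takeover",
    "evidence": "Two sign-ins 20 minutes apart from different continents",
    "next_step": "Reset credentials and revoke active sessions",
}
section_map = {
    "Summary": ["classification"],
    "Evidence": ["evidence"],
    "Recommended Actions": ["next_step"],
}

report = build_report(section_map, insights)
print(report)
```

Each section receives only the findings mapped to it, so the organizational work is done by the mapping rather than by the analyst reading a summary.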

Context, Coordination, and Control: The Complete Picture

Context is one of three pillars in Bricklayer’s platform. The others are Coordination, delivered through the Bricklayer Workbench where human analysts and AI agents collaborate in a shared investigative environment, and Control, which provides enterprise governance and auditability. These pillars are tightly connected.

Coordination works when agents share understanding. Control works when that understanding is visible and traceable. Context is what makes both possible. Without it, the Workbench becomes a view into disconnected activity. Governance has nothing meaningful to enforce. With it, agents operate as a system and humans remain accountable for every outcome.

This is what separates a coordinated Agentic SOC from the fragmented approaches many teams are experimenting with today. Tool-specific agents and isolated automation can solve individual problems. But without shared context, structured coordination, and enterprise-grade governance operating together, they add complexity rather than reduce it. The difference is not any single capability. It is whether the system can accumulate understanding, act on it coherently, and keep humans in charge, all at once.

From Experimentation to Real Operations

The shift underway is not about adding more AI into the SOC. It is about making AI behave the way the SOC already operates: as a way to scale the team while reducing risk exposure.

That requires more than capable agents. It requires a way to preserve understanding, share it across workflows, and make it available to the humans responsible for every decision. That is what Bricklayer’s agentic cybersecurity platform delivers. Faster resolution. Higher accuracy. Reduced noise. And genuine collaboration between analysts and agents, where the system does not just do the work, but understands it.

That understanding is what transforms AI from an experiment into part of the operating model. Coordinated autonomy, with humans firmly in charge.

If you are ready to see what that looks like in your environment, schedule a demo.