In every security operations center (SOC), one thing remains constant: the human analyst is accountable for the outcome.

AI can accelerate investigations. It can surface insights faster than any team. But the responsibility to make the right decision, and to stand behind it, still sits with people.

That’s where most AI approaches in security operations fall short. They focus on automation, not accountability. They execute tasks, but don’t make it easy for analysts to understand, challenge, or change what’s happening.

To be usable in real environments, AI has to operate differently. It has to work in a way that keeps humans in control from the start.

That’s the thinking behind the Bricklayer Workbench.

From Execution to Collaboration

Many AI systems in the SOC behave like background processes. You give them an input, they return an output, and somewhere in between, important decisions are made out of view.

That might be acceptable for low-risk tasks. It doesn’t hold up in enterprise security operations, where every conclusion can have real consequences.

Analysts need to understand how an investigation has unfolded. They need to step in when something doesn’t look right. And they need the ability to adjust the direction of the work based on their own judgment and expertise.

This is less about automation and more about coordination.

The Workbench introduces a model where AI agents and human analysts operate in the same environment, giving analysts the visibility and control needed to make decisions with confidence.

1. The Bricklayer Workbench: A Shared Space Where Humans and AI Agents Work Together

The Workbench is built around a simple idea: humans and AI agents should work side by side.

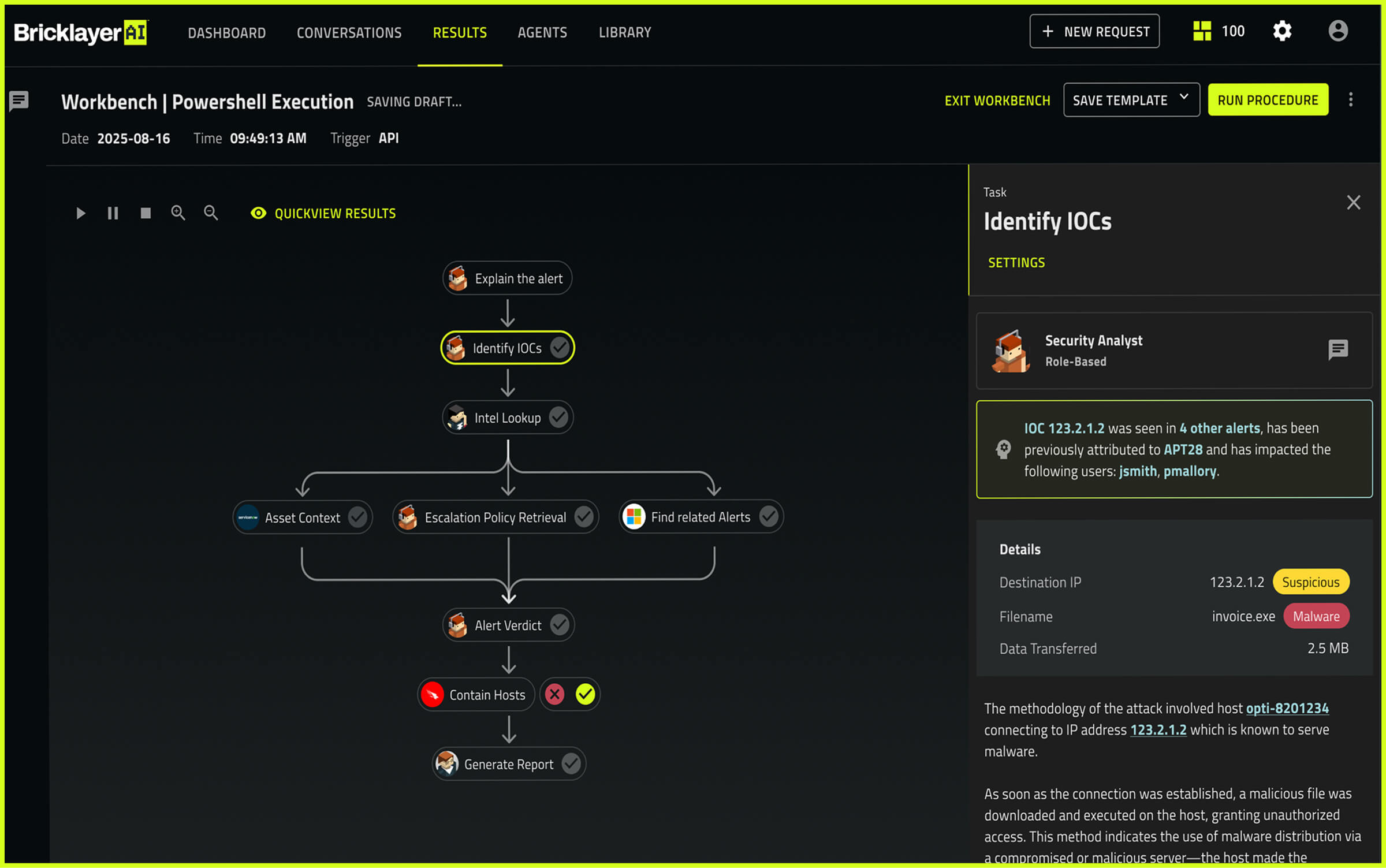

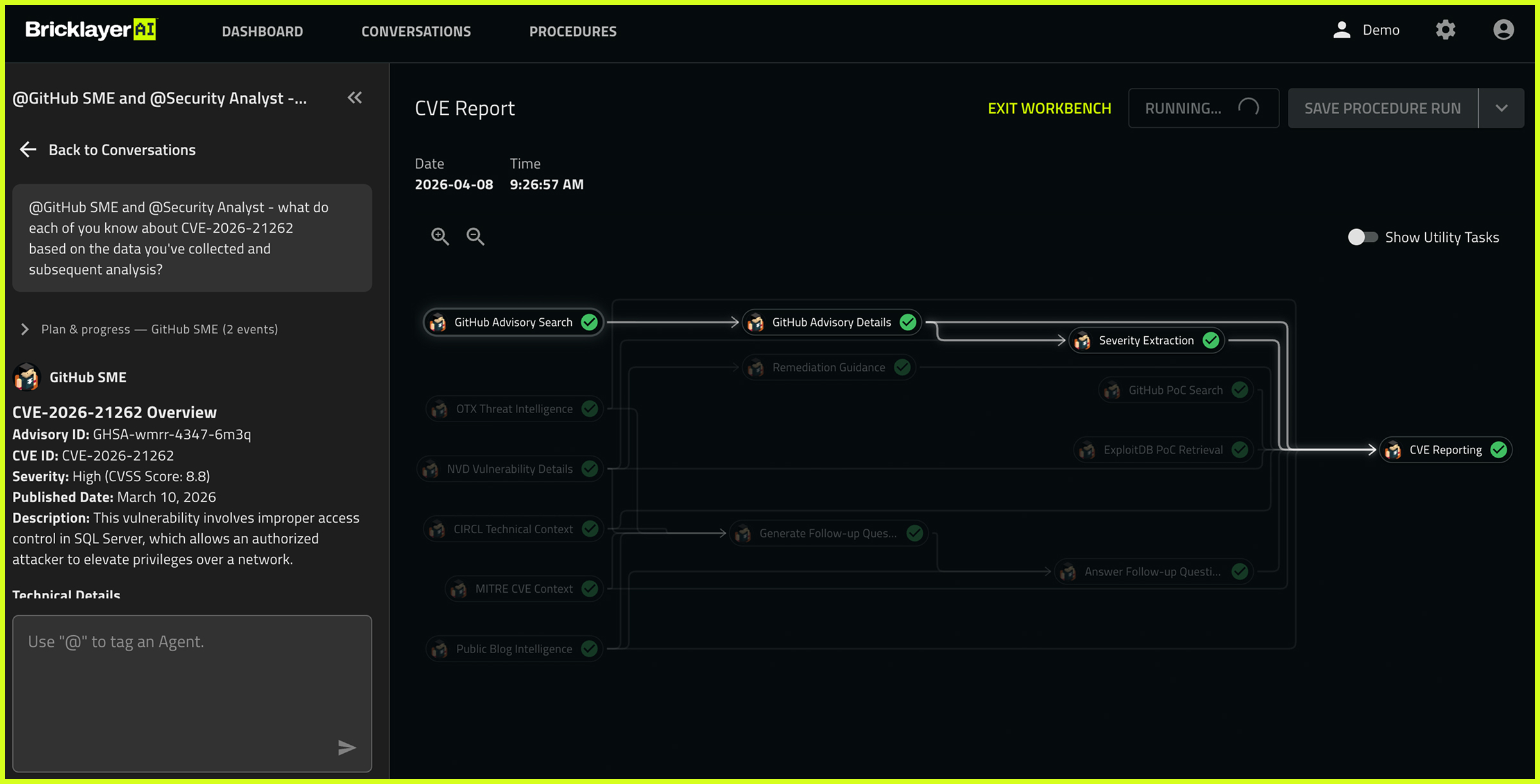

In practice, that means analysts are active participants throughout an investigation, not just recipients of a final output. They can verify what agents have done, drill into tasks to see which agents were involved, and understand what data and context informed each step. If something doesn’t look right, they can correct a flawed step or ask for specific tasks to be redone without restarting everything. They can also step in while work is in progress and push for deeper analysis in areas that warrant more scrutiny. The level of involvement stays in the analyst’s hands.

This reflects how good investigative work actually happens. It is iterative. It involves judgment calls, course corrections, and moments where a human needs to override what a system has concluded. The Workbench is designed to support all of that, not just the clean linear path.

What makes this possible is that agents and analysts share the same working environment. Findings are visible as they develop. Context is carried throughout. When an analyst redirects the investigation, agents pick up from that point with the full picture intact. Nothing is lost, and the work doesn’t have to start from scratch.

The result is a model where AI contributes speed and scale, and humans contribute judgment and accountability. Neither operates in isolation. Both are working toward the same outcome, at the same workbench.

2. Transparent Execution: Making AI Decisions Understandable

If analysts cannot see how a conclusion was reached, they cannot confidently act on it.

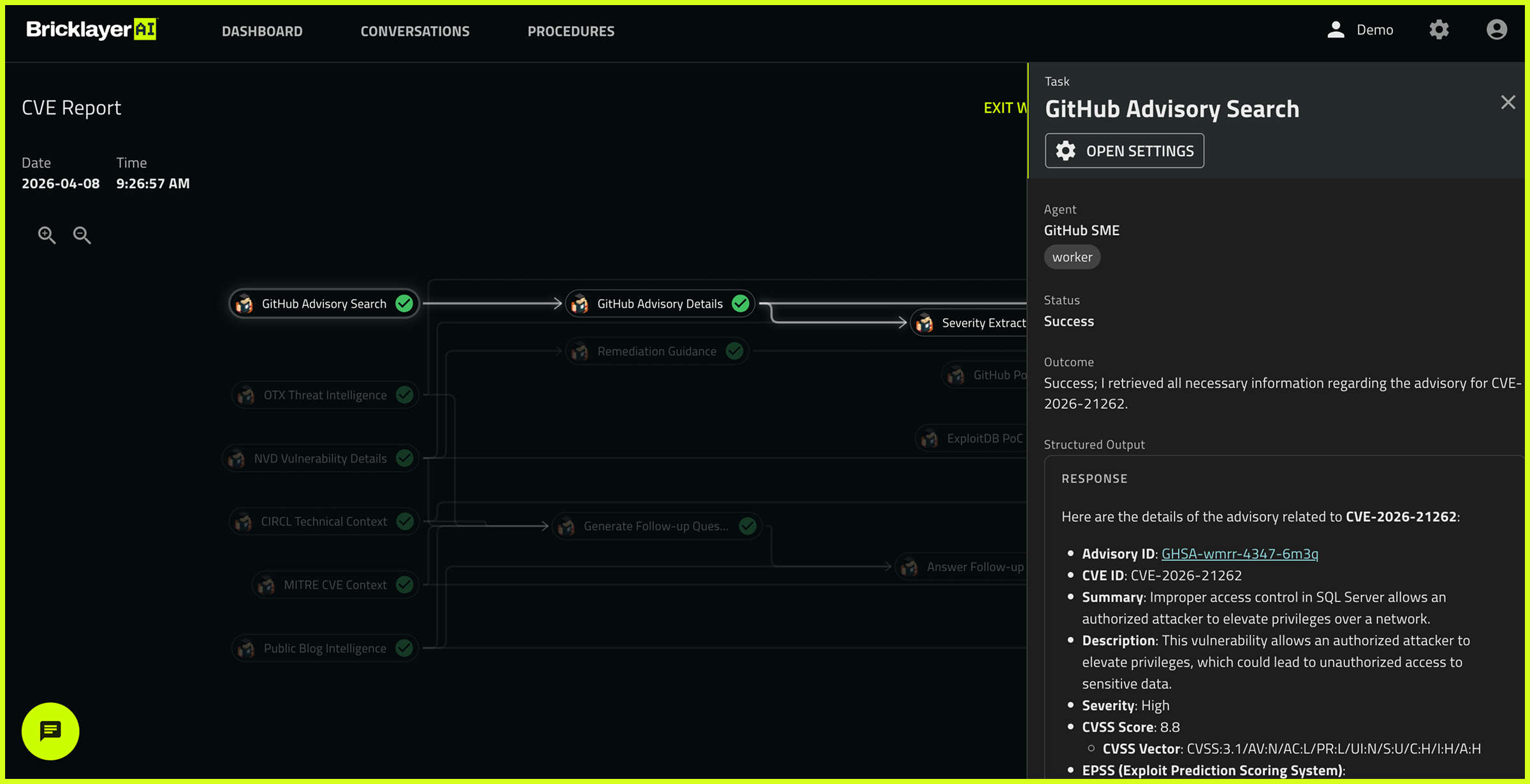

The Workbench addresses this by making every step of an investigation understandable, not just the conclusion.

Each investigation is broken into clear tasks so analysts can follow what agents are doing in sequence. Instead of a single output, there is a structured process that can be inspected and questioned at any point. Analysts can see the inputs that started the investigation, the external data that was pulled in, prior outputs generated along the way, and any internal organizational documents that shaped the result. If something looks off, they can quickly trace it back to the source.
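To make the idea of tracing a result back to its source concrete, here is a minimal sketch. It is not Bricklayer's data model or API: the `TaskRecord` class, its field names, the `trace_sources` helper, and the sample identifiers are all hypothetical, chosen only to mirror the four kinds of context described above.

```python
from dataclasses import dataclass, field


@dataclass
class TaskRecord:
    """Hypothetical record of one investigation task and what informed it."""
    task_id: str
    agent: str
    trigger_inputs: list[str] = field(default_factory=list)  # what started the work
    external_data: list[str] = field(default_factory=list)   # data pulled from outside
    prior_outputs: list[str] = field(default_factory=list)   # task_ids this task built on
    internal_docs: list[str] = field(default_factory=list)   # organizational documents


def trace_sources(task_id: str, records: dict[str, TaskRecord]) -> list[str]:
    """Walk back through prior outputs and collect every source behind a task."""
    seen_tasks: set[str] = set()
    sources: list[str] = []
    stack = [task_id]
    while stack:
        current = stack.pop()
        if current in seen_tasks:
            continue
        seen_tasks.add(current)
        record = records[current]
        for source in record.trigger_inputs + record.external_data + record.internal_docs:
            if source not in sources:
                sources.append(source)
        # Follow the chain of earlier tasks this one depended on.
        stack.extend(record.prior_outputs)
    return sources


# Illustrative data only; the task names and source labels are made up.
records = {
    "triage": TaskRecord("triage", "triage-agent", trigger_inputs=["alert:4711"]),
    "enrich": TaskRecord("enrich", "intel-agent",
                         external_data=["threat-feed:ip-reputation"],
                         prior_outputs=["triage"]),
    "verdict": TaskRecord("verdict", "lead-agent",
                          internal_docs=["runbook:phishing"],
                          prior_outputs=["enrich"]),
}

print(trace_sources("verdict", records))
# ['runbook:phishing', 'threat-feed:ip-reputation', 'alert:4711']
```

In this sketch, an analyst who doubts the verdict can see in one call that it rests on a runbook, a threat feed lookup, and the original alert, and can then inspect whichever of those looks off.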

The Workbench also surfaces how tasks relate to each other. Investigations often depend on earlier findings, and a mistake early on can compound into larger problems. Visualizing those dependencies gives analysts a clear picture of where to intervene to correct course without disrupting the rest of the work.
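One way to picture why dependencies matter is as a small graph problem. The sketch below is an assumption about how such a model could work, not a description of how the Workbench is implemented: the `affected_by_correction` function and the task names are hypothetical. Given a map of which tasks depend on which, it finds the tasks that would need to be redone after a correction and, by exclusion, the ones that can be left alone.

```python
from collections import deque


def affected_by_correction(depends_on: dict[str, list[str]], corrected: str) -> set[str]:
    """Return the corrected task plus every task that depends on it, directly or not."""
    # Invert the "depends on" map so each task lists the tasks that build on it.
    dependents: dict[str, list[str]] = {task: [] for task in depends_on}
    for task, upstream in depends_on.items():
        for parent in upstream:
            dependents.setdefault(parent, []).append(task)

    affected = {corrected}
    queue = deque([corrected])
    while queue:
        current = queue.popleft()
        for child in dependents.get(current, []):
            if child not in affected:
                affected.add(child)
                queue.append(child)
    return affected


# Illustrative investigation; every task name here is made up.
depends_on = {
    "triage": [],
    "enrich_ip": ["triage"],
    "check_logins": ["triage"],
    "scope_hosts": ["enrich_ip"],
    "verdict": ["scope_hosts", "check_logins"],
}

redo = affected_by_correction(depends_on, "enrich_ip")
keep = set(depends_on) - redo

print(sorted(redo))  # ['enrich_ip', 'scope_hosts', 'verdict']
print(sorted(keep))  # ['check_logins', 'triage']
```

Here, correcting the IP enrichment step invalidates only the work built on it. The triage and login checks are untouched, which is the sense in which an analyst can correct course without disrupting the rest of the investigation.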

This level of transparency turns AI from something that produces answers into something that can be reasoned about. Analysts are not asked to trust the system. They are given the information needed to validate it.

3. Group and Direct Conversations: Working With Agents in Context

In a real SOC, investigations are collaborative. Analysts ask questions, challenge each other, and refine their thinking as new information comes in.

The Workbench brings that same dynamic to the interaction between humans and AI agents.

Analysts can engage directly with subject matter experts and role-based agents during an investigation. They can ask why a conclusion was reached, request additional analysis, or push the system to explore a different angle. These are not one-off prompts. They are ongoing conversations that stay tied to the context of the investigation.

Group conversations extend this further. Multiple agents working on different parts of a problem can be engaged at once, alongside the analyst. This creates a shared workspace where findings are discussed, refined, and acted on as the investigation progresses.

This matters because it reflects how investigations actually get done. Decisions are rarely made in isolation. They are the result of discussion, iteration, and validation.

By enabling that same process with AI agents, the Workbench makes them usable as part of a team, not just as tools.

Why This Matters Now

AI in the SOC is moving quickly, but adoption at scale depends on more than capability.

Security leaders need to know that:

- Human analysts can step in and take control at any point
- Decisions can be explained and defended
- Workflows can adapt to new information without starting over

Without these assurances, AI introduces risk and friction instead of reducing them.

The Workbench addresses this directly. It gives analysts a way to stay deeply involved, to understand what’s happening, and to guide the outcome. That’s what makes AI operationally viable in enterprise security operations.

Coordination as the Foundation

Security teams don’t need more isolated automation or more siloed AI agents. They need systems that bring together people, agents, and context in a way that works under real conditions.

The Workbench, as part of Bricklayer’s agentic cybersecurity platform, does exactly that.

It creates a shared environment where investigations are visible, interactive, and controlled by the people responsible for the outcome. AI contributes speed and scale, but the human remains the ultimate decision-maker.

That balance is what makes agentic AI usable in the enterprise SOC.

And it’s what turns AI from an experiment into part of the operating model.

If you’re looking to scale SOC operations with AI agents and workflows that operate as a natural extension of your team, schedule a demo to see Bricklayer in action.